The year 2025 has become a turning point in the evolution of scholarly publishing: artificial intelligence has begun to move from experimental add-on to central component of editorial workflows. In this year alone, journal editors, reviewers, and authors have had to navigate a rapidly transforming landscape in which AI-driven systems increasingly shape how manuscripts are screened, evaluated, improved, and even conceptualized.

Far from simply automating mundane tasks, AI tools have begun to reshape the norms and expectations of scholarly communication, raising new questions about authenticity, authorship, and the future of peer review. This makes it a good moment to step back and ask: what, precisely, have we learned from this year of accelerated AI adoption, and what does it portend for the integrity and inclusiveness of academic publishing?

AI IS A COMPASS, NOT THE CAPTAIN

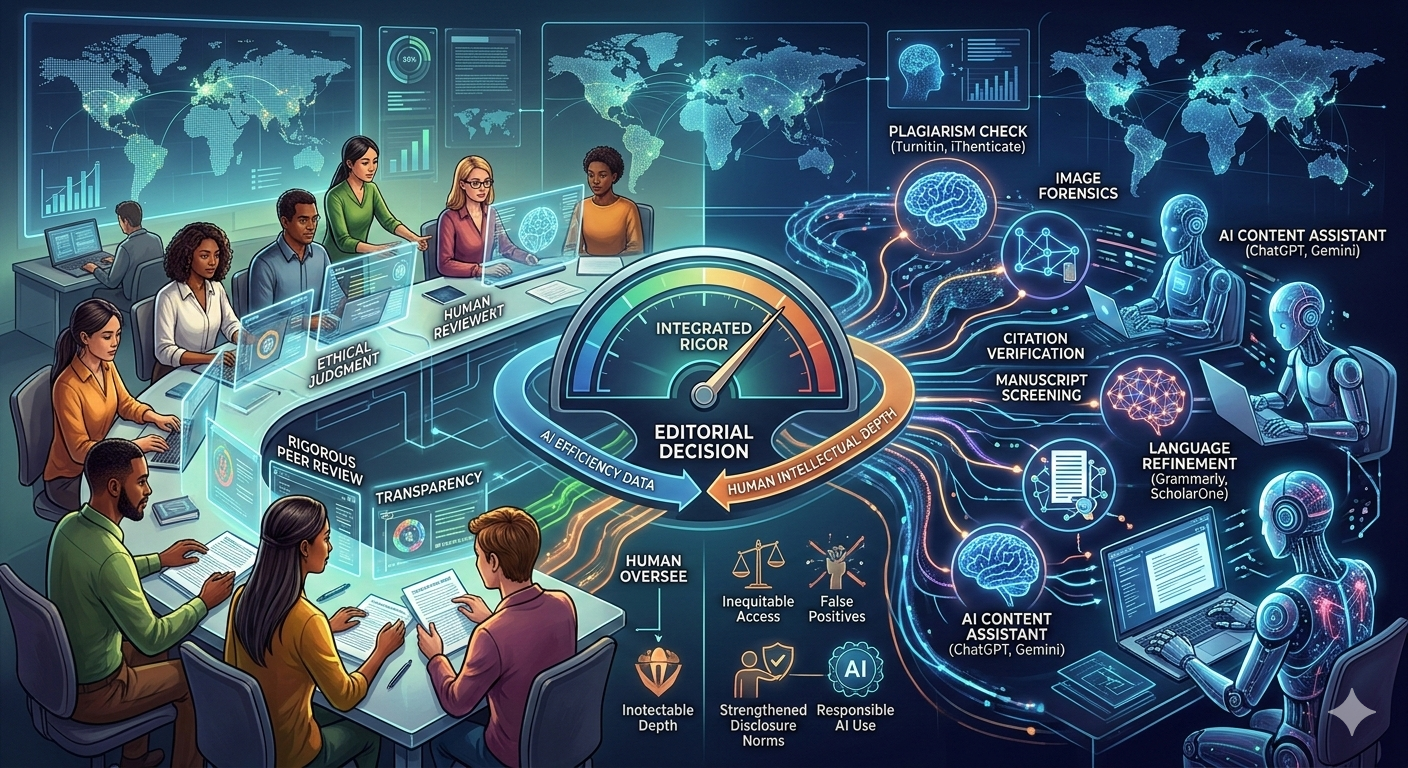

Much of the momentum in 2025 has come from increasingly sophisticated AI tools integrated directly into editorial pipelines. Manuscripts now pass through automated linguistic checks, citation verifiers, data-screening modules, and image-integrity detectors long before they reach a human editor. These tools have not only reduced the burden of repetitive screening but also made manuscript handling faster and more consistent. For many journals, especially those with small editorial teams, AI has made it possible to triage submissions quickly without skipping initial checks for plagiarism, fabricated data, or manipulated visuals. Yet this efficiency comes with a critical lesson: AI assistance is only as reliable as the human oversight that accompanies it. Editors this year consistently reported that these tools can flag false positives, misread disciplinary nuance, or miss more sophisticated forms of misconduct. While AI strengthens editorial work, it cannot replace the judgment, skepticism, and contextual awareness of trained human reviewers. The most successful editorial teams in 2025 have therefore adopted a hybrid approach, using AI as a first-pass assistant while refining protocols that prioritize transparency and accountability in final decisions.

For editors, the most visible shift in 2025 has been the rise of AI tools that quietly absorb much of the manual, repetitive work that once slowed the editorial cycle. Language models such as ChatGPT and Gemini are now routinely used by authors to restructure arguments, improve clarity, and raise the academic tone of their writing, often narrowing the linguistic gap that previously disadvantaged non-native English speakers. AI-based grammar and style tools such as Grammarly and DeepL Write improve the readability of manuscripts before submission, reducing the time editors spend on preliminary language corrections. On the editorial side, tools such as Turnitin’s AI-writing detection module, iThenticate’s similarity checker, and Proofig’s image-forensics software have become integral to first-stage screening, flagging possible AI-generated text, plagiarism, and manipulated or duplicated images within seconds. Workflow platforms such as ScholarOne and Editorial Manager have also embedded automated triage features that categorize manuscripts, check reference formatting, and flag missing ethics statements, allowing editors to focus on substantive decisions rather than administrative checks.

These examples show that AI is no longer a futuristic promise but a practical assistant that automates routine tasks, facilitates clearer communication, and strengthens the integrity of submissions. At the same time, they underscore the need for human oversight in final judgments.

This shift has brought opportunities as well as challenges. On the positive side, authors from non-English-speaking regions have found AI-based writing aids useful for improving the clarity and structure of their manuscripts, easing some of the linguistic barriers that have historically disadvantaged researchers from the Global South.

On the negative side, this year has also seen a rise in submissions exhibiting what might be described as AI-inflated writing: fluent but shallow, polished yet lacking empirical depth. Journals have responded by tightening disclosure policies and educating authors about responsible AI use, reinforcing the principle that AI should enhance, rather than replace, scholarly expression. Most importantly, 2025 has surfaced a deeper equity issue: while well-resourced publishers can integrate AI seamlessly, smaller journals, particularly those in the Global South, often lack access to these tools, widening the technological divide. This disparity raises a vital question about the future of academic publishing: will AI democratize knowledge production, or will it amplify existing inequalities? Reflections from this year suggest that the answer depends on whether the publishing community can secure shared access, transparent governance, and inclusive participation in setting norms for AI.

A FUTURE WITH AI AND HUMANS, NOT AI OR HUMANS

A critical point about 2025 is that AI is no longer just a technological upgrade; it marks a structural shift in how scholarship is created, validated, and disseminated. It challenges long-held assumptions about authorship, demands new competencies from editors, and pushes the publishing ecosystem toward greater accountability. Yet the lesson of this year is not to resist this revolution but to shape it with intention and clarity. For journals, this means investing in AI literacy among editors and reviewers, establishing transparent governance frameworks, and encouraging authors to use AI tools responsibly and to declare their use. At the same time, the global publishing community must work to narrow disparities in access to AI, so that innovation does not deepen existing inequalities, particularly for researchers and journals in resource-constrained regions.

AI is undeniably a transformative force, and used strategically, it can create a genuine win-win for editors and authors alike. When treated as a compass, AI can reduce repetitive workload, refine the language of manuscripts, and support clearer scholarly communication. But once it starts acting as the captain, the ship risks drifting off course in an ocean of competition, away from the stronger research ideas that only human intellect and inquiry can anchor. This year has shown that the future of peer review and editorial work depends on balancing technological efficiency with human judgment, ethical responsibility, and global equity. AI may be reshaping the machinery of publishing, but the commitment to rigor, transparency, and fairness that underpins scholarly communication remains, and must remain, firmly in our hands.

Sanjib Pohit is a Professor, and Sovini Mondal is a Research Associate at the National Council of Applied Economic Research. Views expressed here are personal.